Python pyodbc Unicode issue

Solution 1

This is something I think is best handled with Python, instead of fiddling with pyodbc.connect arguments and driver-specific connection string attributes.

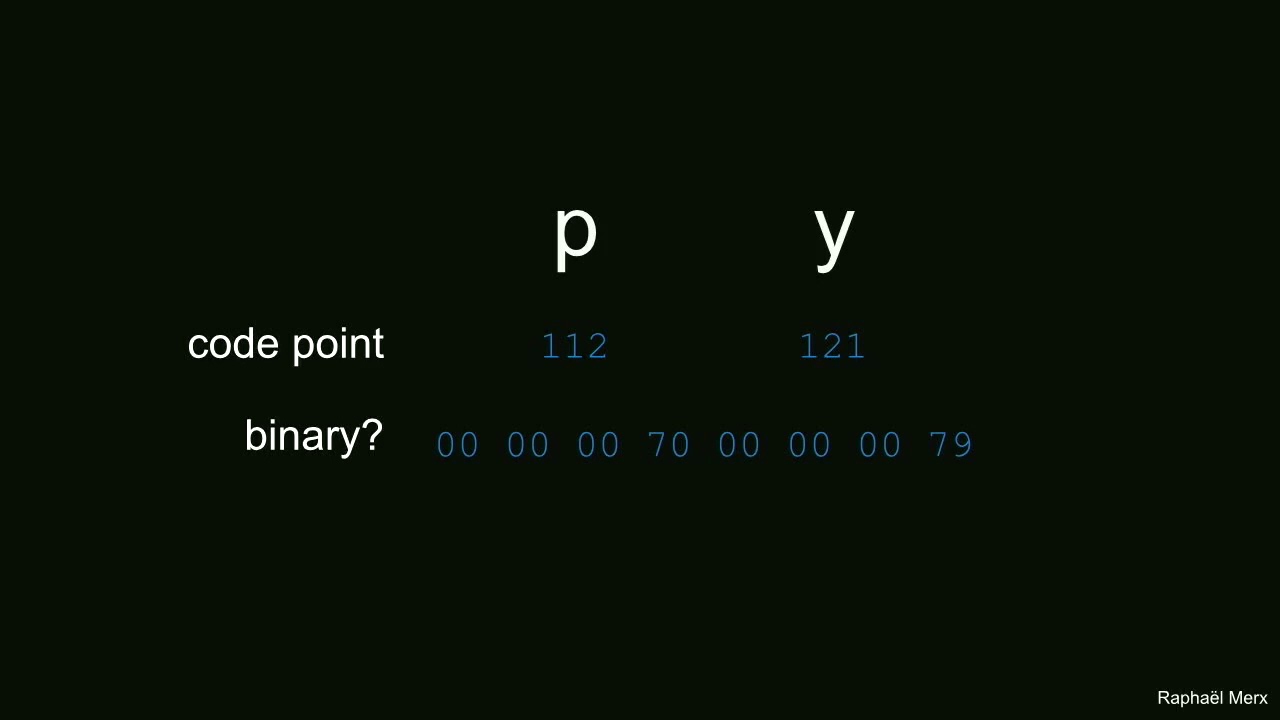

'\xe4' is a Latin-1 encoded byte string representing the Unicode character ä.

To explicitly decode the pyodbc result in Python 2.7:

>>> res = '\xe4'

>>> res.decode('latin1'), type(res.decode('latin1'))

(u'\xe4', <type 'unicode'>)

>>> print res.decode('latin1')

ä

In Python 3.x no explicit decode is needed, because the str type is already Unicode text:

>>> res = '\xe4'

>>> res, type(res)

('ä', <class 'str'>)

>>> print(res)

ä
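If the value does reach Python 3 as raw bytes, the same explicit decode still applies. The following is a minimal sketch, assuming the driver hands back the single Latin-1 byte shown above; the variable names are illustrative and not part of pyodbc.

```python
# Assumed input: the raw Latin-1 byte for "ä", as in the example above.
raw = b"\xe4"

# Latin-1 maps each byte to the code point with the same number.
text = raw.decode("latin-1")
print(text, type(text))

# The same byte is not valid UTF-8 on its own, so a wrong guess fails loudly
# instead of silently producing the wrong character.
try:
    raw.decode("utf-8")
    utf8_ok = True
except UnicodeDecodeError as exc:
    utf8_ok = False
    print("utf-8 failed:", exc.reason)
```

Decoding explicitly keeps the choice of encoding visible in the code rather than hidden in driver settings.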

Solution 2

For Python 3, add the following right after conn = pyodbc.connect(DSN='datbase',ansi=True,autocommit=True):

conn.setdecoding(pyodbc.SQL_CHAR, encoding='utf8')

conn.setdecoding(pyodbc.SQL_WCHAR, encoding='utf8')

conn.setencoding(encoding='utf8')

or

conn.setdecoding(pyodbc.SQL_CHAR, encoding='iso-8859-1')

conn.setdecoding(pyodbc.SQL_WCHAR, encoding='iso-8859-1')

conn.setencoding(encoding='iso-8859-1')

etc...
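The three calls above can be wrapped in one small helper so that a single encoding name is applied consistently. This is only a sketch: apply_text_encoding and RecordingConnection are hypothetical names, and the stand-in class exists purely so the example runs without a database.

```python
def apply_text_encoding(conn, sql_char, sql_wchar, encoding="utf-8"):
    """Apply one encoding to both decoding and encoding on a connection."""
    conn.setdecoding(sql_char, encoding=encoding)
    conn.setdecoding(sql_wchar, encoding=encoding)
    conn.setencoding(encoding=encoding)
    return conn


class RecordingConnection:
    """Hypothetical stand-in that records calls instead of talking to a driver."""

    def __init__(self):
        self.calls = []

    def setdecoding(self, sqltype, encoding=None):
        self.calls.append(("setdecoding", sqltype, encoding))

    def setencoding(self, encoding=None):
        self.calls.append(("setencoding", encoding))


# Plain strings stand in for pyodbc.SQL_CHAR and pyodbc.SQL_WCHAR here.
fake = apply_text_encoding(
    RecordingConnection(), "SQL_CHAR", "SQL_WCHAR", "iso-8859-1"
)
print(fake.calls)
```

With a real connection the call would look like apply_text_encoding(conn, pyodbc.SQL_CHAR, pyodbc.SQL_WCHAR, "utf-8").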

Python 2:

cnxn.setdecoding(pyodbc.SQL_CHAR, encoding='utf-8')

cnxn.setdecoding(pyodbc.SQL_WCHAR, encoding='utf-8')

cnxn.setencoding(str, encoding='utf-8')

cnxn.setencoding(unicode, encoding='utf-8')

or, with whatever encoding name applies in place of the placeholder 'encode-foo-bar':

cnxn.setdecoding(pyodbc.SQL_CHAR, encoding='encode-foo-bar')

cnxn.setdecoding(pyodbc.SQL_WCHAR, encoding='encode-foo-bar')

cnxn.setencoding(str, encoding='encode-foo-bar')

cnxn.setencoding(unicode, encoding='encode-foo-bar')
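To decide which encoding name to use in place of the placeholder, it can help to decode a known value under several candidates and compare the results. The Python 3 sketch below uses a hypothetical helper, decode_candidates. It also shows that the two UTF-8 bytes for ä, read as UTF-16-LE, give U+A4C3, which matches the u'\ua4c3' reported in the question; whether that is what actually happened in the driver is a guess.

```python
def decode_candidates(raw, encodings):
    """Decode raw bytes under each encoding; None marks a failed decode."""
    results = {}
    for name in encodings:
        try:
            results[name] = raw.decode(name)
        except UnicodeDecodeError:
            results[name] = None
    return results


latin1_bytes = "\xe4".encode("latin-1")  # b'\xe4'
utf8_bytes = "\xe4".encode("utf-8")      # b'\xc3\xa4'

print(decode_candidates(latin1_bytes, ["latin-1", "utf-8"]))
print(decode_candidates(utf8_bytes, ["latin-1", "utf-8", "utf-16-le"]))
```

Only the candidate that returns the expected character for a known row is a plausible setting for setdecoding.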


rogue-one


Updated on September 14, 2022

Comments

- rogue-one 3 months

I have a string variable res which I have derived from a pyodbc cursor, as shown at the bottom. The table test has a single row with data ä, whose unicode codepoint is u'\xe4'.

The result I get is

>>> res,type(res)

('\xe4', <type 'str'>)

Whereas the result I should have got is

>>> res,type(res)

(u'\xe4', <type 'unicode'>)

I tried adding charset as utf-8 to my pyodbc connect string as shown below. The result was now correctly set as a unicode, but the codepoint was for some other string, ꓃, which could be due to a possible bug in the pyodbc driver.

conn = pyodbc.connect(DSN='datbase;charset=utf8',ansi=True,autocommit=True)

>>> res,type(res)

(u'\ua4c3', <type 'unicode'>)

Actual code

import pyodbc

pyodbc.pooling=False

conn = pyodbc.connect(DSN='datbase',ansi=True,autocommit=True)

cursor = conn.cursor()

cur = cursor.execute('SELECT col1 from test')

res = cur.fetchall()[0][0]

print(res)

Additional details

Database: Teradata

pyodbc version: 2.7

So how do I now either

1) cast ('\xe4', <type 'str'>) to (u'\xe4', <type 'unicode'>) (is it possible to do this without unintentional side-effects?)

2) resolve the pyodbc/unixodbc issue

- rogue-one, over 7 years: Thanks @Bryan, I will try this out today.

- rogue-one, over 7 years: Thanks, it works as expected. However, our requirement is to support Asian characters too, so I am going with JayDeBeApi, which uses JDBC via jpype.

- Gord Thompson, almost 5 years: Thanks for posting an update relevant to current versions of pyodbc (4.x and up). More details are available at the pyodbc Wiki.

- Captain Jack Sparrow, over 2 years: Thank you! This was helpful for me using Python3 and MySQL ODBC 8.0 ANSI Driver on MacOS.