Why do I have to manually 'Restart Management Network' on vSphere 5 host after reboot to get networking available?

Solution 1

Check that nothing else is using the IP address allocated to the management network. If the host detects another device on that address at boot, the management interface will not come up.
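One rough way to test for such a conflict is to take the ESXi host off the network and see whether anything still answers on its management address. The sketch below is only an illustration: it assumes a Linux-style `ping` (`-c` for count, `-W` for timeout) on the machine running the check, and the function name and the `runner` parameter are made up for this example. A device that drops ICMP would not be detected, so checking the switch's ARP or MAC tables is the more reliable test.

```python
import subprocess


def address_in_use(ip, runner=subprocess.run):
    """Return True if something answers a single ICMP echo request on `ip`.

    Assumption: a Linux-style `ping` binary is on the PATH. `runner` is a
    hypothetical hook so the check can be exercised without real traffic.
    """
    result = runner(
        ["ping", "-c", "1", "-W", "1", ip],
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    # ping exits with status 0 only when at least one reply was received.
    return result.returncode == 0
```

Run it against the management IP while the host is powered off or unplugged; a `True` result means some other device is holding the address.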

Solution 2

What kind of NICs are you using? Have you checked whether these NICs are listed in the VMware Compatibility Guide?

http://www.vmware.com/resources/compatibility/search.php (In "What are you looking for" click "IO Devices")

Also, because the uplinks are in an EtherChannel, the load balancing policy in your vSphere network configuration must be set to "Route based on IP hash".
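To see why the switch side has to treat both links as a single channel under this policy, here is a simplified model of the IP hash calculation as VMware's documentation describes it: XOR the source and destination IPv4 addresses and take the result modulo the number of uplinks. The function name is invented for this sketch, and the real vSwitch implementation may differ in detail.

```python
import ipaddress


def ip_hash_uplink(src_ip, dst_ip, uplink_count):
    """Pick an uplink index for a source/destination IPv4 pair.

    Simplified model only: XOR the two 32-bit addresses, then take the
    result modulo the number of uplinks in the team.
    """
    src = int(ipaddress.IPv4Address(src_ip))
    dst = int(ipaddress.IPv4Address(dst_ip))
    return (src ^ dst) % uplink_count


# The same source can land on different uplinks depending on the destination.
print(ip_hash_uplink("10.0.0.1", "10.0.0.2", 2))  # 1
print(ip_hash_uplink("10.0.0.1", "10.0.0.3", 2))  # 0
```

Since one address can send on either physical NIC, a switch that does not have those ports bundled would see the same MAC address moving between ports.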


growse

Updated on September 18, 2022

Comments

-

growse over 1 year

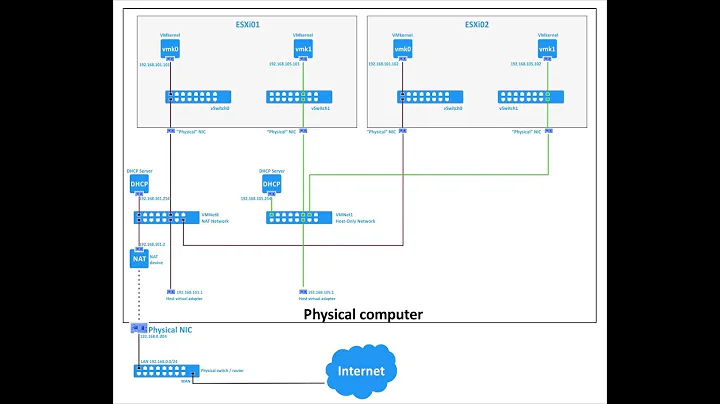

growse (over 1 year ago): I've got a couple of vSphere 5.0 hosts in a small lab environment here and I've noticed a strange behaviour. When one of the hosts gets rebooted, it is unresponsive on the network until I log into the ESX console, press F2 to customize, and select Restart Management Network. Once this is done, the networking works perfectly as expected.

Each host has two NICs which are trunked together using EtherChannel to a Cisco 3750. The link is also an 802.1Q VLAN trunk, and the management network is configured on VLAN 121 with the VM traffic on VLAN 118.

Why would the host be completely dead to the world until I physically kick it?

Edit

Sample switch config for trunk:

    interface Port-channel2
     description Blade 1 EtherChannel Trunk
     switchport trunk encapsulation dot1q
     switchport mode trunk
    end
    !
    !
    interface GigabitEthernet4/0/1
     description Bladecenter1 CPM 1A
     switchport trunk encapsulation dot1q
     switchport mode trunk
     speed 1000
     duplex full
     channel-group 2 mode on
    end

vSwitch teaming settings:

Management port group settings:

growse (over 12 years ago): I've just noticed that the Mgmt network port group has an MTU of 1500, whereas the Port-Channel interface has an MTU of 9000. Is it possible this is related?

Chopper3 (over 12 years ago): Possibly. It would be interesting to see the status of the ports/trunk from the Cisco side before and after the reset. BTW, I've never seen this kind of problem before and we have many, many hosts, hence my interest.

growse (over 12 years ago): Give me a few hours and I'll schedule a reboot to replicate to see what the switch does. I guess that the switchports come back up as 'OK', but will have to test to confirm.

gusya59 (over 12 years ago): @growse MTU 9000 is the usual setting for jumbo frames. The port channel presumably has jumbo frames enabled, which is why you are seeing this. Personally, I'd set both to the same frame size. Also, the switches must be configured to use jumbo frames. This could be one of the reasons why it 'crashes' until a manual restart.

gusya59 (over 12 years ago): Can't seem to edit the above, but I would also like to add that jumbo frames are best used for vMotion transfers to decrease transfer time. So technically, if the VMkernel is using the same group as shown in the screenshot, you would definitely benefit from enabling jumbo frames. Different MTUs on different port groups should not cause an issue.

growse (over 12 years ago): I've got jumbo on the VMkernel for vMotion, but the management is set at 1500.

Jonathan (over 11 years ago): Did you ever solve this? I'm having the same problem with ESX 5.1 on a Dell blade using Dell 8024k switches. Shutting down either one LAG member port or the whole LAG helps. Restarting the management network does not help.

growse (over 12 years ago): The NICs are Broadcom NetXtreme II BCM5708 1000Base-SX. They were the default in the IBM HS21 blades that I'm using and I believe they're listed in the guide. The NIC teaming load balancing setting is also set to Route based on IP hash for all hosts.

duenni (over 12 years ago): What happens if you reboot with only one cable from the trunk attached to the switch?

duenni (over 12 years ago): I don't know Cisco, but just to be sure: ESXi (or VMware in general) only supports static link aggregation. Is the aggregation static or dynamic on your Cisco 3750?

growse (over 12 years ago): I've added the switch configuration to the question. The trunks are configured statically (I believe) with EtherChannel, not LACP.
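For anyone unsure whether their own port channel is static or negotiated, the keyword after `mode` on the `channel-group` line decides it: on Cisco IOS, `on` means a static bundle, `active`/`passive` mean LACP, and `desirable`/`auto` mean PAgP. The sketch below scans pasted config text for those lines; the function and table names are made up for illustration, and it is not a full IOS parser.

```python
import re

# Cisco IOS channel-group mode keywords and the bundling method they select.
MODE_TO_METHOD = {
    "on": "static",
    "active": "LACP",
    "passive": "LACP",
    "desirable": "PAgP",
    "auto": "PAgP",
}


def channel_group_methods(config_text):
    """Return {channel-group number: set of bundling methods} found in the text."""
    found = {}
    pattern = r"channel-group\s+(\d+)\s+mode\s+(\S+)"
    for group, mode in re.findall(pattern, config_text):
        method = MODE_TO_METHOD.get(mode.lower(), "unknown")
        found.setdefault(int(group), set()).add(method)
    return found


# The member-port line from the question's switch config:
print(channel_group_methods("channel-group 2 mode on"))  # {2: {'static'}}
```

Applied to the config in the question, this reports group 2 as static, which matches what growse believed.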