How to monitor the Pacemaker cluster using a script?

Rather than modifying the Dummy RA to execute arbitrary scripts, consider using the anything resource agent.

    # pcs resource describe ocf:heartbeat:anything
    ocf:heartbeat:anything - Manages an arbitrary service

    This is a generic OCF RA to manage almost anything.

    Resource options:
      binfile (required): The full name of the binary to be executed.
                          This is expected to keep running with the
                          same pid and not just do something and
                          exit.
      cmdline_options: Command line options to pass to the binary
      workdir: The path from where the binfile will be executed.
      pidfile: File to read/write the PID from/to.
      logfile: File to write STDOUT to
      errlogfile: File to write STDERR to
      user: User to run the command as
      monitor_hook: Command to run in monitor operation
      stop_timeout: In the stop operation: Seconds to wait for kill
                    -TERM to succeed before sending kill -SIGKILL.
                    Defaults to 2/3 of the stop operation timeout.

Point the anything agent at your script with the binfile= parameter. By default the agent only checks that the PID is still running; if you have some other way of monitoring your custom application, define it in the monitor_hook parameter.
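As a rough sketch of what that could look like on RHEL 7 with pcs: the resource name `app_service`, both script paths, and the 30-second monitor interval are hypothetical placeholders, and `websphere` is the group name from the question's `pcs status` output. The function only prints the command unless `RUN=1` is set, so it can be reviewed before it changes the cluster.

```shell
#!/bin/sh
# Hypothetical names and paths; adjust them to your environment.
APP_BIN=/usr/local/bin/app-foreground.sh        # must keep running with the same PID
HEALTH_CHECK=/usr/local/bin/app-healthcheck.sh  # assumed to exit 0 when the app is healthy

create_app_resource() {
    # Build the pcs command as positional parameters so quoting stays intact.
    set -- pcs resource create app_service ocf:heartbeat:anything \
        "binfile=$APP_BIN" \
        "monitor_hook=$HEALTH_CHECK" \
        op monitor interval=30s \
        --group websphere

    if [ "${RUN:-0}" = "1" ]; then
        "$@"                  # execute on a cluster node
    else
        printf '%s\n' "$*"    # dry run: only show the command
    fi
}

create_app_resource
```

Two things are worth checking before relying on this. The sketch assumes the agent treats a non-zero exit status from monitor_hook as a failed monitor, so a health check that prints true/false would need to translate that into an exit status. Also, per the stop_timeout description above, the agent stops the service by sending signals to the PID, so a separate stop script would not be called.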


Vinod

Updated on September 18, 2022

Comments

Vinod almost 2 years

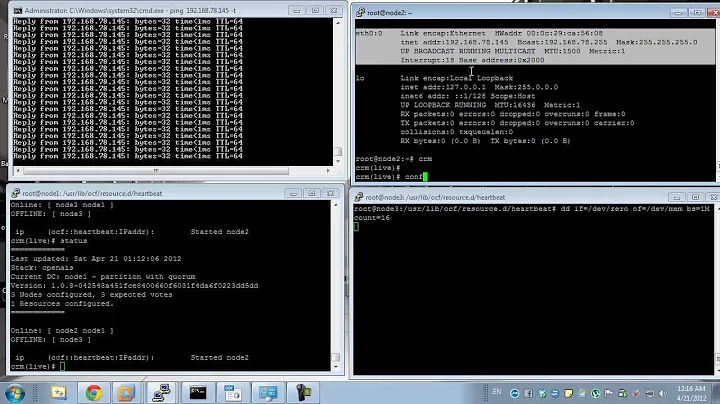

I have created a two-node cluster (both nodes RHEL 7) using pacemaker. It is used to run a custom application. I have created the resources below and assigned them to the cluster:

- A shared storage for application data
- A virtual IP

It works perfectly fine.

Now we have a new requirement. Currently, failover happens only if something goes wrong with the entire server. Pacemaker is unaware of the status of the application running on the active node and completely ignores it. We have a shell script that runs a health check on the application and returns true/false values based on the health of the application.

Can anyone please suggest how to configure pacemaker to use this shell script to regularly check the status of the application on the active node of the cluster, and to initiate failover if the script returns a false value?

I have seen examples in webserver clusters where people create a sample HTML page and use it (http://127.0.0.1/samplepage.html) as a resource with pacemaker to check the health of the Apache webserver on the active node. Please guide me on how to achieve a similar result using a shell script.

Update:

Here is my configuration:

    [root@node1 ~]# pcs status
    Cluster name: webspheremq
    Stack: corosync
    Current DC: node1 (version 1.1.15-11.el7-e174ec8) - partition with quorum
    Last updated: Wed Jun 14 20:38:48 2017
    Last change: Tue Jun 13 20:04:58 2017 by root via crm_attribute on svdg-stg29

    2 nodes and 3 resources configured: 2 resources DISABLED and 0 BLOCKED from being started due to failures

    Online: [ node1 node2 ]

    Full list of resources:

     Resource Group: websphere
         websphere_fs    (ocf::heartbeat:Filesystem):    Started node1
         websphere_vip   (ocf::heartbeat:IPaddr2):       Started node1
         FailOverScript  (ocf::heartbeat:Dummy):         Started node1

    Daemon Status:
      corosync: active/enabled
      pacemaker: active/enabled
      pcsd: active/enabled

To start and stop the application, I have two shell scripts. During failover, I would need stop.sh to run on the node from which resources will be moved and start.sh to run on the node to which the cluster is failing over.

I did a little experimenting and found that people use a dummy resource to meet this kind of requirement (executing scripts during failover).

So here is what I have done so far:

I created a dummy resource (FailOverScript) for testing the application start/stop scripts, like below:

    [root@node1 tmp]# pcs status resources
     Resource Group: websphere
         websphere_fs    (ocf::heartbeat:Filesystem):    Started node1
         websphere_vip   (ocf::heartbeat:IPaddr2):       Started node1
         FailOverScript  (ocf::heartbeat:Dummy):         Started node1

As of now, I have included test scripts under the start and stop actions of the resource FailOverScript. It should execute the scripts failoverstartscript.sh and failoverstopscript.sh respectively when this dummy resource starts and stops.

    [root@node1 heartbeat]# pwd
    /usr/lib/ocf/resource.d/heartbeat
    [root@node1 heartbeat]#
    [root@node1 heartbeat]# grep -A5 "start()" FailOverScript
    FailOverScript_start() {
        FailOverScript_monitor
        /usr/local/bin/failoverstartscript.sh
        if [ $? = $OCF_SUCCESS ]; then
            return $OCF_SUCCESS
        fi
    [root@node1 heartbeat]#
    [root@node1 heartbeat]#
    [root@node1 heartbeat]# grep -A5 "stop()" FailOverScript
    FailOverScript_stop() {
        FailOverScript_monitor
        /usr/local/bin/failoverstopscript.sh
        if [ $? = $OCF_SUCCESS ]; then
            rm ${OCF_RESKEY_state}
        fi

But when this dummy resource is started or stopped (through manual failover), the scripts do not execute. I have tried different things but am still unable to figure out why. I need some help finding the reason the scripts do not execute automatically during failover.

Matt Kereczman about 7 years

Can you share your configuration?

Matt Kereczman about 7 years

... and how you're starting your application? Ideally, you would configure your application in Pacemaker, which would allow Pacemaker to monitor the application AND the node.

Vinod about 7 years

@MattKereczman I have added configuration details as an update.

Matt Kereczman about 7 years

That's just the view of the running resources, I want to see how they're configured:

    # pcs cluster cib > /tmp/cib.xml

Vinod about 7 years

Please find the requested file here: ge.tt/28wrZIl2

Matt Kereczman about 7 years

Pasted here as well, I'll look in a little bit: pastebin.com/FgmNEBKz

Centimane over 6 years

@Vinod You added the stubs for the start and stop functions of your FailOverScript, but what about the monitor function? It sounds like you want your monitor function to call the script that will give you status.
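A minimal sketch of such a monitor function, under a few assumptions: the health-check path is hypothetical, the script is assumed to signal health through its exit status (0 for healthy), and the state file follows the Dummy agent's `OCF_RESKEY_state` convention seen in the stop function above. The return-code defaults are spelled out only so the snippet stands alone; a real agent gets them by sourcing ocf-shellfuncs.

```shell
#!/bin/sh
# Standard OCF return codes, defaulted here so the sketch runs on its own.
: "${OCF_SUCCESS:=0}"
: "${OCF_ERR_GENERIC:=1}"
: "${OCF_NOT_RUNNING:=7}"

# Hypothetical locations; both can be overridden from the environment.
: "${HEALTH_CHECK:=/usr/local/bin/app-healthcheck.sh}"
: "${STATE_FILE:=${OCF_RESKEY_state:-/tmp/FailOverScript.state}}"

FailOverScript_monitor() {
    # No state file: the resource is not started on this node.
    if [ ! -f "$STATE_FILE" ]; then
        return "$OCF_NOT_RUNNING"
    fi

    # Resource is started, so ask the application's own health check.
    if "$HEALTH_CHECK" >/dev/null 2>&1; then
        return "$OCF_SUCCESS"
    fi

    # Started but unhealthy: report a failed monitor to Pacemaker.
    return "$OCF_ERR_GENERIC"
}
```

How Pacemaker reacts to the failed monitor (restart in place or move to the other node) depends on settings such as on-fail and migration-threshold, so those would need to be checked against the desired failover behaviour.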

Vinod about 7 years

Thanks @Matt Kereczman. Sorry, I could not work on this until now. I will check this and update the outcome. Before that, one query please: it looks like the anything RA is missing in my setup. This is what I found on the Red Hat site (link).

Matt Kereczman about 7 years

I wasn't aware they did that. I agree it's better to write a proper RA, but I'm not sure I agree that using the 'systemd' unit file is any better or worse than the anything agent. The implied assumption that anyone can sit down and write an RA is a little far-fetched; to be fair, RHEL is geared towards enterprise, so for their audience that likely isn't an issue. However, if you're still interested in the agent, you can get it from upstream: github.com/ClusterLabs/resource-agents/blob/master/heartbeat/…